Field Notes from Carbon Five

A blog to help you navigate the world of product management, engineering, and design.

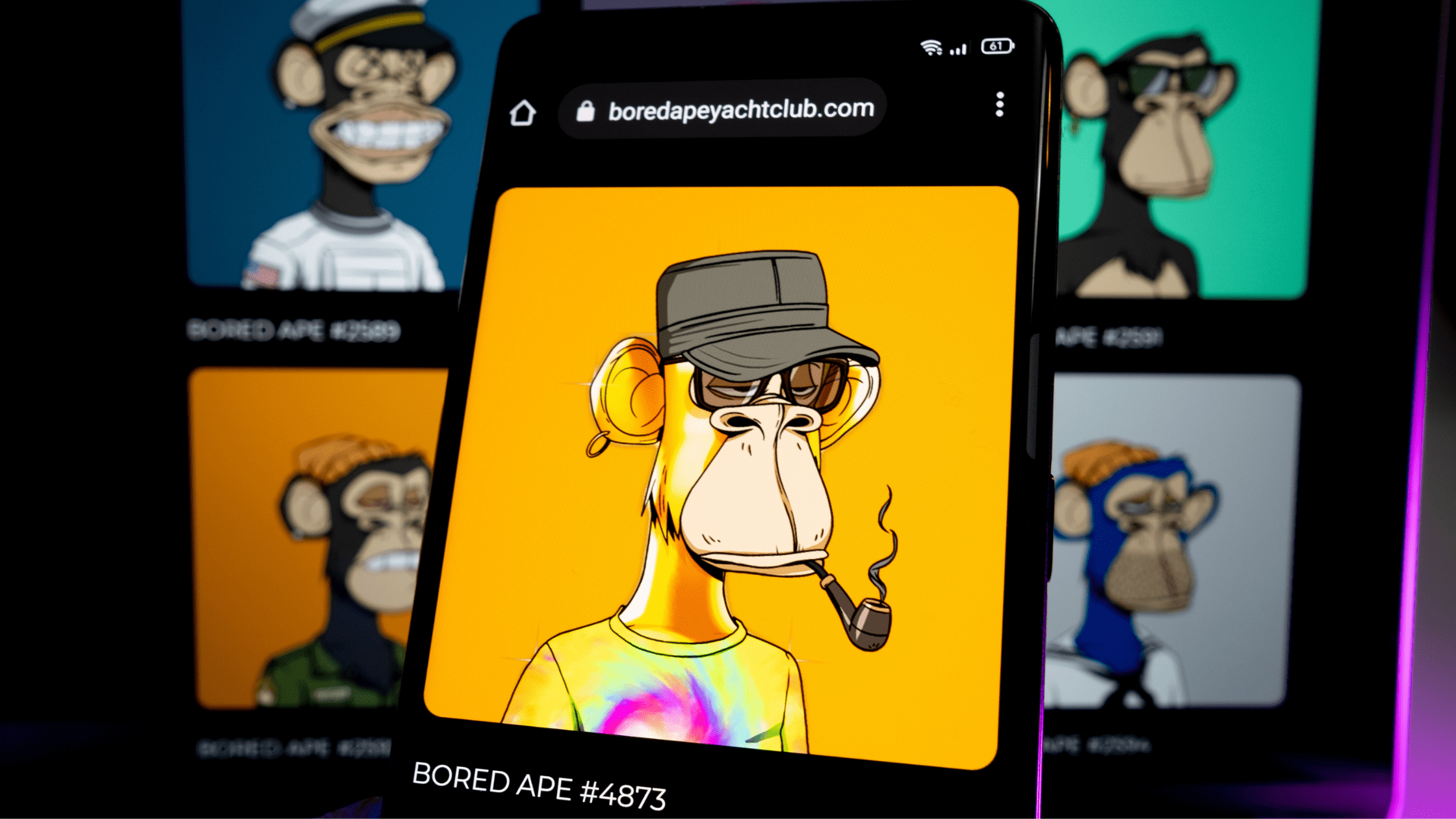

What You Need To Know About Non-Fungible Tokens (NFTs)

If you’ve been on the internet in the past several months, you’ve probably heard the acronym “NFT.” Or maybe you’ve seen some drawings of monkeys wearing clothes, or heard the term “CryptoKitties.” So what are these? They are part of a relatively new collectable market coming out of blockchains. NFT stands for non-fungible token, which …

New Product Designers: Here’s How to Craft a Successful Portfolio in 2022

It’s safe to say that a portfolio is one of the most important means to landing your first design job. Yet the work to build a portfolio is arduous for designers at any level. Gathering artifacts and documentation from past projects is time-consuming and often reveals disparities in depth, breadth, and overall quality from project …

West Monroe Acquires Carbon Five to Continue Growing Digital Product Development Solutions

WEDNESDAY, DEC. 1 — Today, West Monroe announced it has acquired Carbon Five, a national product management, digital design, and software engineering firm. The acquisition is part of West Monroe’s strategy to expand its end-to-end digital services in response to unprecedented market demand and comes on the heels of acquiring Verys, a 200-person product development …

Take Command of Your Ruby Code with the Command Pattern

It’s no secret that Ruby trivializes many classic design patterns. What might take 30 lines in Java can often be a one-liner in Ruby. But there’s more to learning a design pattern than reading some pseudo code about Widgets and AbstractFlyweightDecoratorFactory classes. The Command pattern is an abstraction that will be familiar to most experienced …

Why You Shouldn’t Sleep on Ruby on Rails in 2022

First released to the public in 2004, Ruby on Rails is an open source web-application framework written in the Ruby programming language. We sat down with Carbon Five Director of Engineering Matt Brictson to discuss all things Ruby on Rails, including the benefits of using Rails versus an all-Javascript stack, what’s in store for the …

Carbon Five Roll Call: Isai Lopez Rodas, Software Engineer

Carbon Five Roll Call is a blog series introducing you to our team of product managers, designers, and software engineers at Carbon Five. Meet the team and learn how we can help support your next project. This week, we’d like to introduce you to Isai! Name: Isai Lopez Rodas Title: Software Engineer Hometown: Petaluma, CA …

How to Run a Great Retrospective: A Detailed Recipe

You’ve read all the agile docs about how important it is to have a regular reflection meeting. You’ve sat through so many retros that you’ve lost count—and maybe you’ve even run more than a few yourself. But, for some reason, your retrospectives just aren’t as magical as the ones you read about. You’re not alone! …

Considering Intersectionality in the Tech Community at Large

Welcome to our final post (for now) in the series on intersectionality in the tech industry. Before now, we were focused on how we at Carbon Five might improve at building products with an intersectional lens. For those unfamiliar with the term, intersectionality is defined by Merriam Webster as “the complex, cumulative way in which …

Carbon Five Roll Call: Ifeanyi Iyke-Azubogu, Software Engineer Intern

Carbon Five Roll Call is a blog series introducing you to our team of product managers, designers, and software engineers at Carbon Five. Meet the team and learn how we can help support your next project. Now introducing, Ifeanyi! Name: Ifeanyi Iyke-Azubogu Title: Software Engineer Intern Hometown: Baltimore, MD College/Universtiy: University of Maryland College Park Emoji of choice: ⏳ …

#C5Mentors: Anna Neyzberg on Making the Tech Industry Accessible for All

Anna Neyzberg’s first job in the tech industry was not great: women employees weren’t encouraged to speak up, and when they did, management didn’t pay attention. Now a software engineer at Carbon Five, Anna co-founded ElixirBridge in 2016 to teach people from underrepresented groups how to code. In our final post in a series celebrating …